Introduction – The Crisis Of Digital Authenticity In A Programmable Reality

The transition from the Indian Evidence Act (IEA), 1872, to the Bharatiya Sakshya Adhiniyam (BSA), 2023, represents India’s official acknowledgement of the digital epoch. By elevating electronic records to the status of primary evidence under Section 57, the legislature has modernised the “form” of evidence. However, the “substance” of evidence remains under existential threat.

We are entering an era of Synthetically Generated Information (SGI), where generative AI can fabricate high-fidelity audio-visual records that leave no traditional forensic footprint.

The current legal framework, specifically Section 63 of the BSA, operates on a 19th-century “Custodial Trust” model. It presumes that if a human signs a certificate stating a device was operational, the data within that device is authentic. This is a fatal fallacy in 2026.

A “wealthy coder”—a litigant with the capital to deploy advanced AI—can now bypass the judicial process by presenting a “perfect lie” that satisfies all procedural requirements of Section 63 while being fundamentally forged.

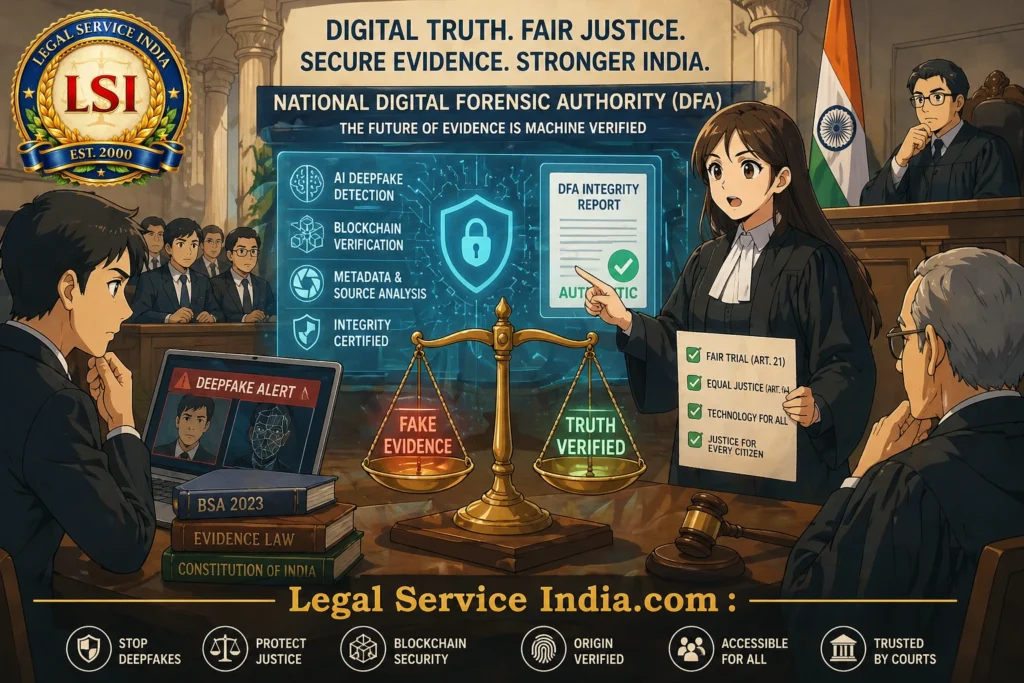

Need For A National Digital Forensic Authority (DFA)

- Safeguards the right to a fair trial under Article 21

- Shifts from procedural certification to technical validation

- Acts as a social justice equaliser

- Provides blockchain-verified integrity reports

- Eliminates prolonged admissibility disputes

- Enables real-time verification in courts

By providing government-backed, blockchain-verified integrity reports, the DFA ensures that a poor litigant’s truth is not silenced by a wealthy opponent’s high-tech forgery.

Statutory And Legislative Framework – Section 63 Of The BSA As A “Paper Shield”

The transition to the Bharatiya Sakshya Adhiniyam (BSA), 2023, was intended to modernise the law of evidence. However, reliance on Section 63 BSA (formerly Section 65B of the IEA) has created a “paper shield” that is increasingly porous when confronted with sophisticated forgeries.

2.1 The Section 63 Paradox: Procedural Vs. Ontological Truth

- Requirement: Certificate confirming proper device operation

- Focus: Post-capture integrity only

- Gap: Ignores origin of content

The “Born-Digital” Forgery Problem

In the era of high-fidelity generative AI, a forgery is “born digital”. If a deepfake is generated on a workstation and transferred to a smartphone, the Section 63 certificate remains technically accurate, yet the evidence is fabricated.

Outcome: The law verifies the container while ignoring the content.
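
The gap can be shown with a minimal sketch. It assumes the post-capture check is a plain SHA-256 comparison; the byte strings and function names below are hypothetical stand-ins, not part of any statutory procedure. Both a genuine recording and a generated clip pass, because the check only confirms that a file has not changed since its digest was recorded.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def integrity_intact(data: bytes, recorded_digest: str) -> bool:
    """Post-capture check: has the file changed since its digest was recorded?"""
    return sha256_hex(data) == recorded_digest


# Hypothetical stand-ins for file contents; real inputs would be media files.
camera_recording = b"bytes captured by a physical camera sensor"
generated_clip = b"bytes produced by a generative model on a workstation"

# Digests recorded when each file is copied onto the certified device.
digest_real = sha256_hex(camera_recording)
digest_fake = sha256_hex(generated_clip)

# Both files pass: the check says nothing about where the bytes came from.
print(integrity_intact(camera_recording, digest_real))  # True
print(integrity_intact(generated_clip, digest_fake))    # True
```

The check fails only if a file is modified after its digest is recorded, which is exactly the situation a born-digital forgery avoids.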

2.2 Doctrine Of Proportionality And Evidentiary Reform

- Burden Of Proof: Falls entirely on the opposing party

- Issue: High cost of private forensic experts

- Impact: Financial injustice for common litigants

Under the doctrine of proportionality, a legal standard imposing a recurring burden despite available technical alternatives must be reformed.

Solution: DFA as a state-backed, cost-effective alternative.

2.3 Constitutional Mandates: Article 14 And Article 21

- Article 14: Prevents unequal access to justice

- Article 21: Guarantees fair trial rights

A system that allows synthetic lies as primary evidence fails the test of “procedure established by law”.

Chasing Digital Truth Globally – The “Expert Gatekeeper” Paradigm

Digital authenticity has become a global judicial crisis. As synthetic media becomes indistinguishable from reality, legal systems are evolving rapidly.

3.1 Global Legislative Shifts (2026)

United States: Proposed FRE 707 (2026)

- Based on Daubert Standard (FRE 702)

- Introduces reliability benchmarks for AI

- Requires expensive expert testimony

European Union: EU AI Act

- Mandates machine-readable deepfake labelling

- Effective against compliant corporations

- Ineffective against open-source/jailbroken AI

China: Cybersecurity Law (2026)

- Uses blockchain for verification

- Reactive model

- Vulnerability window before hashing

3.2 Comparative Analysis Of Digital Evidence Models

| Feature | United States | European Union | China | India (DFA Proposal) |

|---|---|---|---|---|

| Primary Law | FRE 702 / 707 | EU AI Act | Cybersecurity Law | National DFA Framework |

| Trust Model | Expert Testimony | Manufacturer Trust | Reactive Trust | State-Validated |

| Mechanism | Post-Facto Audit | Watermarking | Post-Capture Hashing | AI + Blockchain Validation |

| Evidence Status | Subjective | Compliance-Based | Procedural | Machine Evidence |

| Trial Speed | Low | Moderate | High | Ultra-High |

| Vulnerability | High Cost | Bypass Risk | Time Gap | Secures Origin |

3.3 The “Machine Evidence” Doctrine

- Mandatory Validation: All digital evidence must pass DFA

- Technology Stack:

  - AI artefact detection

  - Blockchain anchoring

  - Sensor noise analysis

Once validated, evidence is no longer a human claim—it becomes “machine evidence”.

This creates a legal presumption of fact, shifting the burden of proof and eliminating admissibility debates.

Courts can then focus purely on the merits of the case.

Section 4: Operational Framework And Core Activities Of The DFA

The National Digital Forensic Authority (DFA) functions as the centralised “technical engine” of the judiciary. Its operations are designed to transition the legal system from a reliance on human testimony to a reliance on verified mathematical truth.

4.1 Mandatory Pre-Admissibility Validation (The Expert Gatekeeper)

- The Validation Filter: No digital record—whether a screenshot, a 4K video, or a satellite image—can be admitted as primary evidence without a DFA Integrity Report.

- The Machine Evidence Certification: The DFA issues a certificate that elevates a record to the status of “Machine Evidence”. This reduces the court’s burden, as the technical validity of the file is no longer a matter of debate but a state-certified fact.

4.2 AI-Ensemble Auditing And Deepfake Detection

- Artefact Analysis: The agency scans for “Generative Noise” and microscopic inconsistencies in lighting, shadows, and frame-rate interpolation typical of AI models like Sora or Kling.

- Biometric Liveness Checks: In cases involving video evidence of individuals, the DFA uses rPPG (Remote Photoplethysmography) to detect blood flow patterns in the face. If the “pulse” is mathematically absent, the video is flagged as a deepfake.
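
The idea behind the liveness check can be sketched in a few lines. This is a simplified illustration, not the DFA's method: it assumes the face region has already been reduced to one average green-channel value per frame, uses a naive DFT, and relies on a hypothetical threshold (`PULSE_THRESHOLD`) and a synthetic test signal. A production rPPG pipeline would also need face tracking, motion compensation, and calibration, and a weak signal can result from heavy compression or poor lighting as well as from synthesis.

```python
import cmath
import math
import random


def power_spectrum(signal, fps):
    """Return (frequency_hz, power) pairs for the mean-removed signal via a naive DFT."""
    n = len(signal)
    mean = sum(signal) / n
    centred = [x - mean for x in signal]
    spectrum = []
    for k in range(1, n // 2):
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                    for t, x in enumerate(centred))
        spectrum.append((k * fps / n, abs(coeff) ** 2))
    return spectrum


def pulse_strength(signal, fps, band=(0.7, 4.0)):
    """Share of total spectral power held by the strongest peak in the heart-rate band."""
    spectrum = power_spectrum(signal, fps)
    total = sum(power for _, power in spectrum)
    in_band = [power for freq, power in spectrum if band[0] <= freq <= band[1]]
    if total == 0 or not in_band:
        return 0.0
    return max(in_band) / total


# Synthetic traces (assumptions for illustration): 10 seconds at 30 frames per second.
rng = random.Random(0)
fps, seconds = 30, 10
frames = fps * seconds
# "live": a 1.2 Hz (72 beats per minute) oscillation plus sensor noise.
live = [0.5 * math.sin(2 * math.pi * 1.2 * t / fps) + rng.gauss(0, 0.1)
        for t in range(frames)]
# "flat": noise only, with no periodic component.
flat = [rng.gauss(0, 0.1) for _ in range(frames)]

PULSE_THRESHOLD = 0.3  # hypothetical cut-off, chosen only for this demonstration

for name, trace in (("live", live), ("flat", flat)):
    has_pulse = pulse_strength(trace, fps) >= PULSE_THRESHOLD
    print(name, "pulse present" if has_pulse else "no clear pulse")
```

The band of 0.7 to 4.0 Hz corresponds to roughly 42 to 240 beats per minute, a common choice for bounding plausible human heart rates.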

4.3 Immutable Anchoring On The National Evidence Blockchain

- Cryptographic Hashing: The DFA computes a SHA-256 hash of every file it processes. Any change to the file, however small, produces a different hash.

- Permanence: This hash is stored on a permissioned national blockchain, so any alteration of the evidence after validation can be detected.
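
A minimal sketch of hashing and anchoring follows. It models the ledger as an in-memory hash chain, which is an assumption made for illustration; a permissioned blockchain would add replication, consensus, and access control. The names `Block` and `EvidenceLedger` are hypothetical.

```python
import hashlib
import json
import time
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class Block:
    index: int
    timestamp: float
    evidence_hash: str  # SHA-256 of the evidence file
    prev_hash: str      # digest of the previous block, linking the chain

    def digest(self) -> str:
        """Hash the block's fields in a stable order."""
        payload = json.dumps(asdict(self), sort_keys=True).encode()
        return hashlib.sha256(payload).hexdigest()


class EvidenceLedger:
    GENESIS = "0" * 64  # placeholder predecessor for the first block

    def __init__(self):
        self.blocks = []

    def anchor(self, evidence: bytes) -> Block:
        """Append a block recording the SHA-256 hash of the evidence."""
        prev = self.blocks[-1].digest() if self.blocks else self.GENESIS
        block = Block(len(self.blocks), time.time(),
                      hashlib.sha256(evidence).hexdigest(), prev)
        self.blocks.append(block)
        return block

    def chain_is_valid(self) -> bool:
        """Check that every block still points at its predecessor's digest."""
        expected_prev = self.GENESIS
        for block in self.blocks:
            if block.prev_hash != expected_prev:
                return False
            expected_prev = block.digest()
        return True

    def is_anchored(self, evidence: bytes) -> bool:
        """True if this exact file content was anchored earlier."""
        file_hash = hashlib.sha256(evidence).hexdigest()
        return any(b.evidence_hash == file_hash for b in self.blocks)


ledger = EvidenceLedger()
ledger.anchor(b"first validated file")
ledger.anchor(b"second validated file")
print(ledger.chain_is_valid())                        # True
print(ledger.is_anchored(b"first validated file"))    # True
print(ledger.is_anchored(b"first validated file!"))   # False

# Rewriting an earlier block breaks the link held by its successor.
ledger.blocks[0] = replace(ledger.blocks[0], evidence_hash="f" * 64)
print(ledger.chain_is_valid())                        # False
```

In this sketch the most recent block has no successor to protect it. Real ledgers close that gap by replicating the chain across independent nodes.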

4.4 Hardware Attestation And Sensor Noise Analysis

- PRNU (Photo-Response Non-Uniformity): Every camera sensor leaves a unique “noise fingerprint” on the images and video frames it captures.

- TEE (Trusted Execution Environment) Validation: Confirms that the data originated from the device’s physical camera sensor and not a simulated environment.
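
The PRNU comparison can be illustrated with a toy model. The sketch below assumes a flat grey scene, a known reference fingerprint per camera, and a hypothetical match threshold (`MATCH_THRESHOLD`). In practice the fingerprint is estimated from many known images and the residual is extracted with a denoising filter, neither of which is shown here.

```python
import math
import random


def normalised_correlation(a, b):
    """Pearson correlation between two equal-length sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    da = [x - mean_a for x in a]
    db = [y - mean_b for y in b]
    denom = math.sqrt(sum(x * x for x in da) * sum(y * y for y in db))
    return sum(x * y for x, y in zip(da, db)) / denom if denom else 0.0


def simulate_flat_frame(fingerprint, rng, brightness=128.0, random_noise=2.0):
    """Toy sensor model: each pixel's gain deviates slightly, plus random noise."""
    return [brightness * (1.0 + k) + rng.gauss(0.0, random_noise)
            for k in fingerprint]


def noise_residual(frame):
    """For a flat scene, the residual is simply the frame minus its mean."""
    mean = sum(frame) / len(frame)
    return [p - mean for p in frame]


rng = random.Random(42)
pixels = 4096
# Hypothetical per-pixel gain deviations for two different sensors.
camera_a = [rng.gauss(0.0, 0.01) for _ in range(pixels)]
camera_b = [rng.gauss(0.0, 0.01) for _ in range(pixels)]

# A frame actually captured by camera A.
residual = noise_residual(simulate_flat_frame(camera_a, rng))

MATCH_THRESHOLD = 0.2  # hypothetical cut-off, chosen only for this demonstration
score_a = normalised_correlation(residual, camera_a)
score_b = normalised_correlation(residual, camera_b)
print("matches camera A:", score_a > MATCH_THRESHOLD)
print("matches camera B:", score_b > MATCH_THRESHOLD)
```

A frame with no sensor origin at all, such as a fully generated image, would be expected to correlate weakly with every registered fingerprint, which is what makes the test useful as an origin check.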

4.5 Metadata Forensics And Sovereign Watermarking

- Forensic Extraction: Verifies GPS coordinates, timestamps, and network pings.

- Active Watermarking: Embeds a forensic watermark for instant verification.

4.6 Dynamic Intelligence & Periodic Protocol Updates

- AI DNA Registry: Maintains signatures from major AI models.

- Quarterly Intelligence Upgrades: Updates detection algorithms every 90 days.

4.7 Social Justice: A Mobile App As A Secondary Equaliser

4.7.1 Neutralising The Hardware Privilege

- The Legacy Gap: Budget devices lack high-level cryptographic signatures.

- The DFA Solution: The DFA-Sakshya app equalises trust across all devices.

4.7.2 Democratic Hashing: Bypassing The “Window Of Vulnerability”

- Millisecond Integrity: Data is hashed instantly at capture.

- State-Backed Custody: Evidence is secured on blockchain immediately.
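
Hashing at the moment of capture might look like the following sketch. It is an illustration under stated assumptions: the receipt format, the function names, and the use of an HMAC with a shared key are hypothetical, and nothing here describes the actual design of the DFA-Sakshya app. A real deployment would more likely use asymmetric signatures with keys held in secure hardware.

```python
import hashlib
import hmac
import json
import time


def capture_receipt(data: bytes, device_id: str, key: bytes) -> dict:
    """Hash the data as soon as it is captured and bind it to a time and a device."""
    record = {
        "device_id": device_id,
        "captured_at": time.time(),
        "sha256": hashlib.sha256(data).hexdigest(),
    }
    message = json.dumps(record, sort_keys=True).encode()
    record["tag"] = hmac.new(key, message, hashlib.sha256).hexdigest()
    return record


def verify_receipt(data: bytes, receipt: dict, key: bytes) -> bool:
    """Check that neither the receipt fields nor the data changed since capture."""
    record = {k: v for k, v in receipt.items() if k != "tag"}
    message = json.dumps(record, sort_keys=True).encode()
    expected = hmac.new(key, message, hashlib.sha256).hexdigest()
    tag_ok = hmac.compare_digest(expected, receipt.get("tag", ""))
    data_ok = record.get("sha256") == hashlib.sha256(data).hexdigest()
    return tag_ok and data_ok


# Hypothetical values for illustration only.
key = b"demo-key-not-for-production"
photo = b"raw bytes from the camera"
receipt = capture_receipt(photo, "device-001", key)

print(verify_receipt(photo, receipt, key))                # True
print(verify_receipt(photo + b"edited", receipt, key))    # False
backdated = dict(receipt, captured_at=0.0)
print(verify_receipt(photo, backdated, key))              # False
```

Because the digest is taken before the file leaves the capture path, there is no interval in which the content can be swapped without invalidating the receipt.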

4.7.3 Equalising The Field: Truth As A Public Utility

- The “Sovereign Seal”: Ensures equal evidentiary weight.

- Eliminating Expert Costs: Provides free state-backed validation.

Section 5: The Judicial Impact – Efficiency, Equity, And The Doctrine Of Technical Finality

The implementation of the National Digital Forensic Authority (DFA) marks a paradigm shift in Indian jurisprudence. It strengthens the framework of 2023 by ensuring evidentiary certainty.

5.1 Neutralising The “Wealthy Coder” And Technical Asymmetry

- Inefficacy Of Self-Certification: Section 63 cannot detect AI-generated evidence.

- Weaponising The “Liar’s Dividend”: Experts create doubt to delay justice.

- Economic Barrier To Truth: High forensic costs restrict access to justice.

5.2 The Courtroom Accelerator

- Doctrine Of Technical Finality: DFA certification removes authenticity disputes.

- Procedural Efficiency: Courts focus only on substantive issues.

5.3 Radical Backlog Reduction

| Aspect | Impact |

|---|---|

| Pre-Trial Streamlining | Technical validation completed before trial |

| Efficiency Gain | Projected 75–80% reduction in delays |

| Case Clearance | Faster disposal of millions of cases |

5.4 Deterrence And The Digital Mirror Effect

- End Of Speculative Forgery: Advanced audits discourage manipulation.

- Predictable Justice: Blockchain-backed evidence ensures certainty.

5.5 Conclusion: The Sovereign Custodian Of Truth

- Truth is deterministic

- Justice is rapid

- Equality is absolute

Section 6: Conclusion – Securing The Future Of The Indian Courtroom

As we move deeper into 2026, reliance on Section 63 BSA exposes vulnerabilities in a deepfake-driven world. The National Digital Forensic Authority (DFA) is the solution.

Key Benefits Of DFA

| Feature | Impact |

|---|---|

| Technical Finality | Ends expert disputes |

| Judicial Acceleration | Projected 75–80% efficiency gain |

| Social Equity | Equal evidentiary power for all citizens |

The DFA is not merely a technical upgrade; it is a sovereign custodian of truth, ensuring justice becomes a mathematical certainty in the age of AI.