Introduction

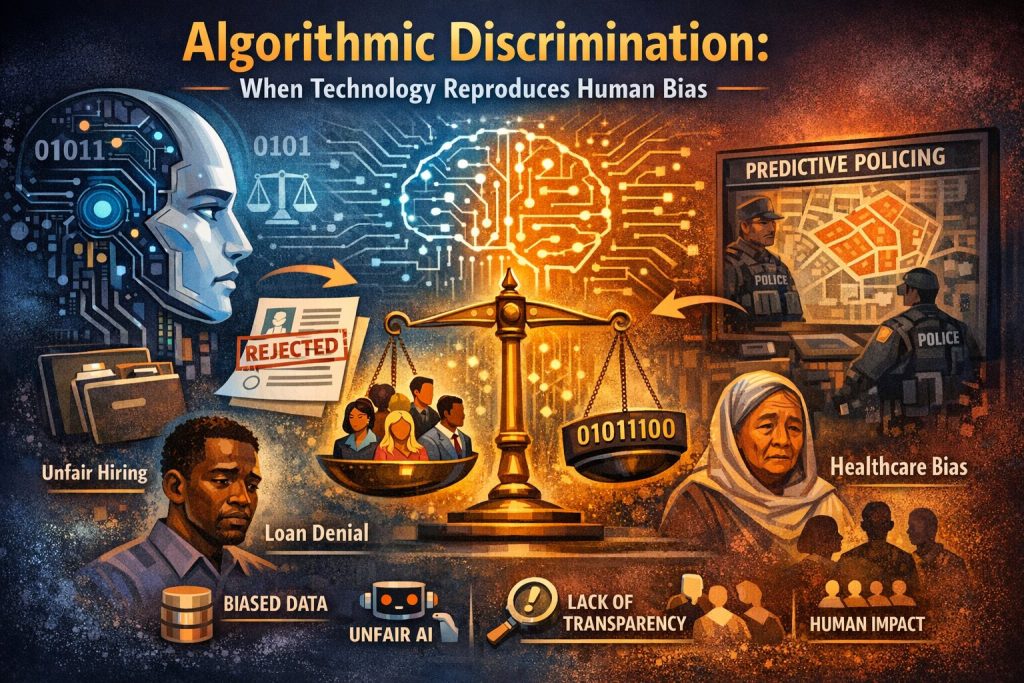

In the digital age, decisions that once depended entirely on human judgment are increasingly made by automated systems. Banks use algorithms to decide loan eligibility, companies rely on automated tools to screen job applicants, and governments employ predictive systems in policing and welfare administration. While these technologies promise efficiency and objectivity, they have also introduced a new legal and ethical challenge known as algorithmic discrimination.

Algorithmic discrimination occurs when automated systems produce outcomes that unfairly disadvantage individuals or groups based on characteristics such as race, gender, religion, age, or socio-economic background. Because these decisions are often hidden within complex computer models, discrimination can occur silently and at scale. As a result, algorithmic discrimination has become one of the most pressing issues at the intersection of technology, law, and human rights.

Understanding Algorithmic Decision-Making

Algorithms are sets of rules or instructions that enable computers to analyze data and produce decisions or predictions. In modern systems, especially those using artificial intelligence and machine learning, algorithms learn patterns from large datasets. These patterns are then used to make automated decisions.

For example, algorithms are widely used in:

- Recruitment and hiring to filter job applications

- Credit scoring to determine loan approvals

- Insurance risk assessment to set premiums

- Predictive policing to identify potential crime hotspots

- Social media platforms to curate content and advertisements

- Healthcare systems to allocate resources or identify high-risk patients

Although these systems appear neutral because they rely on mathematical calculations, they are deeply influenced by the data and assumptions embedded in them. If the data used to train an algorithm reflects existing social biases, the algorithm may replicate or even amplify those biases.

How Algorithmic Discrimination Occurs

Algorithmic discrimination generally arises from three main sources: biased training data, proxy variables, and opaque systems that escape oversight.

- Biased Training Data: Machine learning models rely heavily on historical data. If the data reflects past discrimination or inequality, the algorithm is likely to learn those patterns and reproduce them.

For example, if a hiring algorithm is trained on past recruitment data from a company that historically hired mostly men, the algorithm may learn to favour male candidates and disadvantage female applicants.

- Proxy Variables: Sometimes algorithms do not explicitly use sensitive attributes such as race or religion, but they rely on indirect indicators or “proxies.” For instance, postal codes, educational background, or purchasing behavior can correlate with socio-economic status or ethnicity. As a result, discrimination can occur even when protected attributes are removed from the dataset.

- Opaque or “Black Box” Systems: Many advanced machine-learning systems operate as black boxes, meaning their internal decision-making process is difficult even for experts to interpret. When a system denies someone a loan or rejects a job application, it may be impossible to determine exactly why the decision was made.

This lack of transparency makes discriminatory outcomes difficult to detect and to contest.
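The proxy effect described above can be checked with a very small test: after the protected attribute is dropped, see how well a remaining feature still predicts it. The sketch below is illustrative only. The records, the postal-code labels `PC-1` and `PC-2`, the groups `A` and `B`, and the helper names are all hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical applicant records: (postal_code, group). The group column is the
# protected attribute that would be removed before training a model.
records = [
    ("PC-1", "A"), ("PC-1", "A"), ("PC-1", "A"), ("PC-1", "B"),
    ("PC-2", "B"), ("PC-2", "B"), ("PC-2", "B"), ("PC-2", "A"),
]

def proxy_accuracy(rows):
    """Share of rows whose group is guessed correctly from postal code alone,
    using the majority group within each postal code as the guess."""
    by_code = defaultdict(Counter)
    for code, group in rows:
        by_code[code][group] += 1
    correct = sum(counts.most_common(1)[0][1] for counts in by_code.values())
    return correct / len(rows)

def baseline_accuracy(rows):
    """Share guessed correctly when always predicting the overall majority group."""
    counts = Counter(group for _, group in rows)
    return counts.most_common(1)[0][1] / len(rows)

print(proxy_accuracy(records))     # 0.75
print(baseline_accuracy(records))  # 0.5
```

In this toy data, postal code alone recovers the removed attribute far better than the baseline guess, so a model given postal code can still treat the two groups differently.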

Real-World Examples of Algorithmic Discrimination

Several high-profile cases have demonstrated how automated systems can produce discriminatory outcomes.

Recruitment Algorithms: In one widely discussed example, a large technology company developed an AI-based recruitment tool designed to screen job applicants. The system was trained on historical hiring data dominated by male candidates. As a result, the algorithm learned to downgrade resumes that contained indicators associated with women, such as participation in women’s organizations.

Credit Scoring Systems: Credit scoring algorithms used by financial institutions sometimes assign lower scores to individuals from certain neighbourhoods or socio-economic backgrounds. Even when race is not explicitly considered, geographic and economic data can function as proxies, leading to unequal access to credit.

Predictive Policing: Predictive policing systems analyze historical crime data to determine areas where crime is more likely to occur. However, if police historically concentrated patrols in particular neighbourhoods, the data will reflect higher crime rates there, causing the algorithm to recommend even more policing in those areas. This can create a self-reinforcing cycle of surveillance and bias.
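The self-reinforcing cycle can be illustrated with a deliberately simplified simulation. It assumes two hypothetical districts with identical underlying crime rates, a single patrol sent each round to whichever district has more recorded crime, and new records generated only where the patrol is present. Real systems are far more complex; the function and district names here are invented for illustration.

```python
def simulate_patrols(recorded, true_rate, rounds):
    """Each round, send the single patrol to the district with the most recorded
    crime; only the patrolled district adds new records."""
    recorded = dict(recorded)  # copy so the caller's data is left untouched
    for _ in range(rounds):
        target = max(recorded, key=recorded.get)
        recorded[target] += true_rate[target]
    return recorded

# Both districts have the same true rate; "north" merely starts with one
# extra record because it was patrolled more in the past (an assumption).
start = {"north": 11, "south": 10}
true_rate = {"north": 5, "south": 5}

result = simulate_patrols(start, true_rate, rounds=10)
print(result)  # {'north': 61, 'south': 10}
```

A one-record head start is enough for every patrol to go to the same district, so the recorded gap grows even though the underlying rates are equal.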

Healthcare Algorithms: In healthcare, some algorithms used to identify patients needing additional care have been found to underestimate the needs of certain demographic groups because they relied on healthcare spending as a proxy for illness. Since disadvantaged groups often have lower access to healthcare services, and therefore lower recorded spending, the algorithms incorrectly treated them as healthier.
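A minimal sketch of the spending-as-proxy problem follows. The patients, the `access` factor, and the cost model (1000 per condition, scaled by access) are assumptions made up for illustration, not data from any real system.

```python
from dataclasses import dataclass

@dataclass
class Patient:
    name: str
    chronic_conditions: int  # stand-in for actual health need
    access: float            # fraction of needed care the patient can obtain

    @property
    def spending(self) -> float:
        # Assumed cost model: 1000 per condition, scaled by access to care.
        return 1000 * self.chronic_conditions * self.access

patients = [
    Patient("P1", chronic_conditions=4, access=1.0),
    Patient("P2", chronic_conditions=4, access=0.5),  # same need, less access
    Patient("P3", chronic_conditions=3, access=1.0),
]

# Rank patients for extra care by spending (the proxy) and by need (the target).
by_spending = [p.name for p in sorted(patients, key=lambda p: p.spending, reverse=True)]
by_need = [p.name for p in sorted(patients, key=lambda p: p.chronic_conditions, reverse=True)]

print(by_spending)  # ['P1', 'P3', 'P2']
print(by_need)      # ['P1', 'P2', 'P3']
```

P2 is as ill as P1 but falls to the bottom of the spending-based ranking, which is the pattern the paragraph above describes.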

Legal and Human Rights Implications

Algorithmic discrimination raises significant legal concerns because it can violate fundamental principles of equality and fairness.

Equality Before the Law: Most legal systems prohibit discrimination based on characteristics such as race, gender, religion, disability, or age. When automated systems produce discriminatory outcomes, they may violate anti-discrimination laws even if the discrimination was unintended.

Due Process and Accountability: Automated decision-making can undermine the right to due process. Individuals may face important decisions, such as denial of employment, credit, or welfare benefits, without understanding how those decisions were made or having the ability to challenge them.

Privacy and Data Protection: Algorithmic systems often rely on large amounts of personal data. Improper data collection, profiling, and data sharing can raise serious privacy concerns and may conflict with data protection laws.

Emerging Legal Responses

Governments and regulatory bodies around the world are beginning to address the risks associated with algorithmic discrimination.

Algorithmic Transparency: Some jurisdictions are introducing laws that require companies to disclose when automated decision-making systems are used and to explain how these systems affect individuals.

Algorithmic Impact Assessments: Organizations may be required to conduct impact assessments before deploying AI systems. These assessments evaluate potential risks, including discriminatory outcomes.

Auditing and Accountability: Independent audits of algorithms are increasingly being proposed as a mechanism to detect bias and ensure compliance with anti-discrimination laws.
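One simple check an audit of this kind might include is a comparison of selection rates across groups. The sketch below uses synthetic decisions and hypothetical group labels. The 0.8 threshold echoes the four-fifths rule of thumb used in US employment-selection guidance and is applied here only as an illustrative assumption, not as a legal test.

```python
from collections import defaultdict

def selection_rates(decisions):
    """decisions: iterable of (group, selected) pairs -> {group: share selected}."""
    totals, selected = defaultdict(int), defaultdict(int)
    for group, was_selected in decisions:
        totals[group] += 1
        selected[group] += int(was_selected)
    return {g: selected[g] / totals[g] for g in totals}

def impact_ratio(rates):
    """Lowest group selection rate divided by the highest.
    Assumes at least one group has a non-zero selection rate."""
    return min(rates.values()) / max(rates.values())

# Synthetic decisions: 6 of 10 selected in group_a, 3 of 10 in group_b.
decisions = (
    [("group_a", True)] * 6 + [("group_a", False)] * 4
    + [("group_b", True)] * 3 + [("group_b", False)] * 7
)

THRESHOLD = 0.8  # illustrative assumption, see the note above
rates = selection_rates(decisions)
ratio = impact_ratio(rates)

print(rates)                     # {'group_a': 0.6, 'group_b': 0.3}
print(ratio, ratio < THRESHOLD)  # 0.5 True
```

A ratio below the chosen threshold does not prove discrimination. It flags a disparity that a human reviewer would then need to examine.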

Right to Human Review: Certain legal frameworks emphasize the importance of human oversight, ensuring that automated decisions affecting individuals can be reviewed by a human decision-maker.

The Indian Context

In India, algorithmic discrimination is emerging as an important concern as digital governance and AI-driven services expand rapidly. With the growth of digital banking, e-commerce, fintech platforms, and government digital systems, automated decision-making tools are increasingly being used in everyday services. These systems analyze large amounts of data to make decisions about credit, hiring, identification, and welfare delivery, making algorithms an integral part of modern governance and economic activity.

India has also begun to develop legal and regulatory frameworks to address issues related to data and technology. The Digital Personal Data Protection Act, 2023 emphasizes responsible data handling and the protection of user rights, which indirectly supports the idea of algorithmic fairness. At the same time, policy discussions on artificial intelligence regulation are increasingly focusing on concerns such as bias, accountability, transparency, and the need for oversight in automated systems that influence important decisions.

Given India’s vast diversity and existing socio-economic inequalities, ensuring fairness in algorithmic systems is particularly important. Technologies designed to improve efficiency and automation can sometimes unintentionally reproduce social biases. Examples such as biometric authentication failures, facial recognition errors, digital lending algorithms, and AI-based recruitment systems show how certain groups may be disadvantaged due to location, economic background, or data limitations. Therefore, regular algorithm audits, inclusive and representative datasets, human oversight, and strong legal safeguards will be essential to prevent discrimination and ensure that technological progress benefits all sections of society equally.

Algorithmic Discrimination in the United States and the United Kingdom

In the United States, algorithmic discrimination has become a significant issue as artificial intelligence and automated decision-making systems are widely used in sectors such as criminal justice, finance, hiring, and online services. One widely discussed example is the COMPAS risk assessment algorithm, which has been criticized for showing racial bias in predicting the likelihood of reoffending among criminal defendants. Such cases have raised concerns that automated systems may unintentionally reinforce existing social inequalities. As a result, policymakers, researchers, and civil rights organizations in the United States are increasingly advocating for greater transparency, accountability, and regulation of AI-based systems.

In the United Kingdom, similar concerns have emerged regarding the fairness of algorithmic decision-making. A notable example occurred in 2020, when an automated algorithm used to determine A-level examination results downgraded many students, particularly those from disadvantaged schools, leading to widespread criticism and protests. The controversy highlighted the risks of relying heavily on automated systems without sufficient safeguards. Consequently, the UK government and regulatory bodies have begun emphasizing ethical AI development, fairness in automated systems, and stronger oversight to prevent discriminatory outcomes.

Algorithmic Discrimination in Germany and France

In Germany, concerns about algorithmic discrimination have emerged mainly in areas such as automated hiring, credit scoring, and public administration. Algorithms used by financial institutions and online platforms may unintentionally disadvantage certain groups if the data used to train them reflects existing social inequalities. Germany has responded by emphasizing strong data protection and accountability measures, particularly under the European Union's General Data Protection Regulation (GDPR), which grants individuals rights related to automated decision-making and requires transparency in data processing. German policymakers and researchers have also been active in studying the risks of biased AI systems and promoting ethical guidelines for responsible AI development.

In France, algorithmic discrimination has been debated especially in relation to government digital services, education systems, and employment platforms. Public discussions intensified when automated tools were used in university admission processes and administrative decision-making, raising questions about fairness and transparency. French authorities, including the National Commission on Informatics and Liberty (CNIL), have stressed the importance of algorithmic transparency and citizen rights when automated systems influence public decisions. France has also supported broader European efforts to regulate artificial intelligence, aiming to ensure that technological innovation respects equality, privacy, and fundamental rights.

Ethical Principles for Fair Algorithms

To address algorithmic discrimination, several ethical principles are widely recommended:

- Fairness – Algorithms should treat individuals and groups equitably.

- Transparency – The functioning of automated systems should be understandable.

- Accountability – Developers and organizations must take responsibility for algorithmic decisions.

- Privacy Protection – Personal data used in algorithmic systems must be handled responsibly.

- Human Oversight – Important decisions should not be left entirely to machines.

The Way Forward

Algorithmic discrimination exposes a contradiction in modern technology: tools created to make decisions faster and more objective can repeat human biases on a very large scale. Solving this problem requires cooperation between technology experts, policymakers, legal scholars, and society.

In the future, laws and regulations will likely combine technical safeguards, legal responsibility, and ethical rules to make sure that artificial intelligence works for the benefit of the public. Clear and transparent algorithms, regular checks or audits, diverse and balanced data, and strong legal protections will be important steps to achieve this goal.

Conclusion

Algorithmic discrimination is not merely a technical flaw; it is a profound legal and social issue that touches the core principles of justice, equality, and human dignity. As societies increasingly rely on automated systems to make critical decisions, ensuring fairness in these systems becomes imperative.

The challenge ahead is to harness the power of artificial intelligence while preventing it from perpetuating historical inequalities. By embedding legal safeguards, ethical design, and human oversight into technological systems, societies can ensure that algorithms enhance fairness rather than undermine it.